Setting up a local AI server on a mini PC is a game-changer in 2026. With cloud services raising privacy concerns and costs, running AI models locally gives you full control, low latency, and enhanced security. Whether you are a cybersecurity expert testing threat detection, a student experimenting with machine learning projects, a gamer optimizing AI-driven tools, or a hobbyist building an AI server for home use, a mini PC offers compact power. This guide walks you through everything from hardware checks to running models like Llama or Mistral.

This guide is a good fit if you want offline AI for tasks like natural language processing, image generation, or code assistance without relying on external APIs. Mini PCs with modern NPUs (Neural Processing Units), ample DDR5 RAM, and NVMe SSDs make this feasible even on compact hardware. Expect responsive inference from small, quantized models while keeping your data secure with features like TPM 2.0.

Preparation

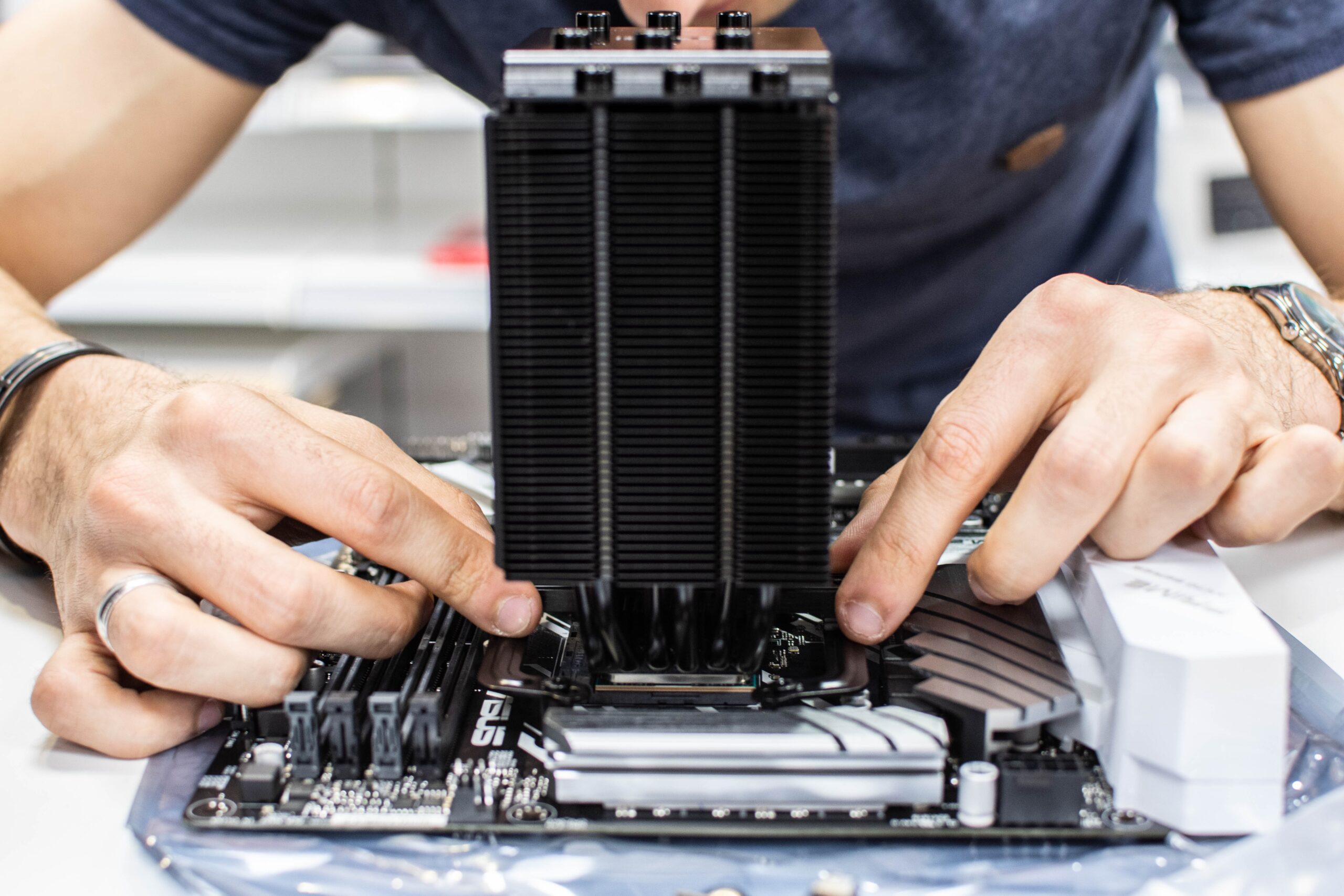

Before diving in, make sure your mini PC meets the basics for a smooth local AI server setup. You need at least an Intel Core Ultra or AMD Ryzen processor with an NPU for AI acceleration, 16GB of DDR5 RAM (32GB is ideal), and a 512GB NVMe SSD for models and datasets. Wi-Fi 6E or wired Ethernet speeds up model downloads and remote access, and good cooling prevents throttling during heavy loads.

Download the essential tools: the Ubuntu 24.04 LTS ISO (recommended for stability) and a USB boot drive creator like Rufus. Back up your data before you start. Ubuntu boots with Secure Boot enabled, so disable it in BIOS only if the installer fails to start, and verify that TPM 2.0 is enabled for secure key storage. Test your mini PC’s power supply; AI workloads can draw 65W+.

- Hardware: NPU-equipped CPU, 16GB+ RAM, NVMe SSD

- Software: Ubuntu ISO, Docker, Ollama

- Tools: USB drive, screwdriver for any upgrades
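
The hardware checks above can be partly automated once any Linux environment is running on the machine (the "Try Ubuntu" live session works). The following is a minimal sketch, not a definitive test: the 16 GiB RAM threshold comes from this guide, while `MIN_FREE_GIB` and the helper names `kib_to_gib_ceil` and `report` are assumptions made for illustration.

```shell
#!/bin/sh
# Preflight sketch: compare this machine with the minimums listed above.

MIN_RAM_GIB=16     # from the guide (32 is ideal)
MIN_FREE_GIB=50    # hypothetical floor for model downloads, not from the guide

# Convert KiB to GiB, rounding up so a "16GB" machine that reports
# slightly less usable memory still counts as 16.
kib_to_gib_ceil() {
    echo $(( ($1 + 1048575) / 1048576 ))
}

# Print OK or WARN for a measured value against a minimum.
report() {  # usage: report LABEL VALUE MINIMUM
    if [ "$2" -ge "$3" ]; then
        echo "OK   $1: ${2} GiB (minimum ${3})"
    else
        echo "WARN $1: ${2} GiB (minimum ${3})"
    fi
}

# Total RAM as reported by the kernel (0 if /proc/meminfo is unavailable).
ram_kib=$(awk '/^MemTotal:/ {print $2}' /proc/meminfo 2>/dev/null)
report "RAM" "$(kib_to_gib_ceil "${ram_kib:-0}")" "$MIN_RAM_GIB"

# Free space on the root filesystem, in KiB (POSIX df output, column 4).
free_kib=$(df -Pk / 2>/dev/null | awk 'NR==2 {print $4}')
report "Free disk" "$(( ${free_kib:-0} / 1048576 ))" "$MIN_FREE_GIB"

# A TPM shows up as a character device when it is enabled in firmware.
if [ -c /dev/tpm0 ] || [ -c /dev/tpmrm0 ]; then
    echo "OK   TPM device present"
else
    echo "WARN no TPM device found; check the BIOS setting"
fi
```

The script only reads system information and prints warnings, so it is safe to run before installing anything. It does not detect an NPU, since the way an NPU is exposed depends on the vendor and driver.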

Step-by-Step Setup Guide

Follow these 8 detailed steps to get your local AI server running. We use Ubuntu and Ollama for simplicity, but principles apply to Windows or other frameworks like LocalAI.

- Create a bootable USB: Download Ubuntu 24.04 ISO from the official site. Use Rufus or Etcher to make a bootable drive. Insert into your mini PC, enter BIOS (usually F2 or Del), set USB as first boot device, and save.

- Install Ubuntu: Boot from USB, select “Try Ubuntu” first to test hardware. Install with full disk encryption for cybersecurity. Create a user account, set a strong password, and reboot.

- Update the system: Open a terminal (Ctrl+Alt+T) and run `sudo apt update && sudo apt upgrade -y`. Install essentials: `sudo apt install curl git build-essential -y`. Reboot.

- Install Docker: Download and run Docker's convenience script, which adds the Docker repo and installs the engine: `curl -fsSL https://get.docker.com -o get-docker.sh && sudo sh get-docker.sh`. Add your user to the docker group: `sudo usermod -aG docker $USER`. Log out and back in.

- Install Ollama: Run `curl -fsSL https://ollama.com/install.sh | sh`. Verify with `ollama --version`. Ollama handles AI model serving efficiently.

- Pull and run a model: Start with a lightweight model: `ollama pull llama3.2`. Run it: `ollama run llama3.2`. Test by chatting in the terminal.

- Set up web UI (Open WebUI): Start the container (Docker pulls the image on first run): `docker run -d -p 3000:8080 --add-host=host.docker.internal:host-gateway -v open-webui:/app/backend/data -e OLLAMA_BASE_URL=http://host.docker.internal:11434 --name open-webui --restart always ghcr.io/open-webui/open-webui:main`. Access it at http://localhost:3000.

- Secure the server: Allow the ports you need, then enable the UFW firewall: `sudo ufw allow 11434/tcp && sudo ufw allow 3000/tcp && sudo ufw enable`. If you manage the machine over SSH, run `sudo ufw allow ssh` before enabling the firewall so you do not lock yourself out. Open port 11434 only if other machines need direct access to the Ollama API. Use SSH keys, not passwords. Set up fail2ban for brute-force protection.
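
Once the steps above are done, a quick smoke test can confirm that both services answer. This is a sketch under a few assumptions: Ollama is listening on its default port 11434, Open WebUI is published on port 3000 as in the `docker run` command, and the helper names `generate_payload` and `probe` are hypothetical. It uses Ollama's `/api/tags` and `/api/generate` HTTP endpoints.

```shell
#!/bin/sh
# Smoke-test sketch for the services set up above.

OLLAMA_URL="http://localhost:11434"   # Ollama's default address
WEBUI_URL="http://localhost:3000"     # port published in the docker run step

# Build a non-streaming /api/generate request body.
# Assumes MODEL and PROMPT contain no quotes or backslashes (no JSON escaping).
generate_payload() {  # usage: generate_payload MODEL PROMPT
    printf '{"model":"%s","prompt":"%s","stream":false}' "$1" "$2"
}

# Report whether a URL answers within two seconds.
probe() {  # usage: probe NAME URL
    if curl -fsS --max-time 2 -o /dev/null "$2" 2>/dev/null; then
        echo "up:   $1"
    else
        echo "down: $1"
        return 1
    fi
}

probe "Open WebUI" "$WEBUI_URL" || true

# Only ask for a completion if the Ollama API is reachable.
if probe "Ollama" "$OLLAMA_URL/api/tags"; then
    curl -fsS "$OLLAMA_URL/api/generate" \
        -d "$(generate_payload llama3.2 'Reply with the single word: ready')"
    echo
fi
```

If Ollama reports as up but Open WebUI cannot see any models, the container may be unable to reach the host's Ollama service; checking the container logs with `docker logs open-webui` is a reasonable first step.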

Optimization Tips

Maximize your mini PC’s AI performance with these 7 tips tailored for cybersecurity, gaming, and student use.

- Use quantized models (e.g., 4-bit) to fit larger LLMs in 16GB RAM.

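- Enable hardware acceleration where your stack supports it: Ollama can use ROCm on supported AMD GPUs and CUDA on NVIDIA GPUs, while NPUs generally need a framework built for them, such as OpenVINO on Intel hardware.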

- Monitor temps with `sensors` (from the lm-sensors package) and add a cooling pad; aim to stay under 80°C.

- Store models on the NVMe SSD so they load faster.

- Batch requests in web UI for gaming AI or cybersecurity simulations.

- Integrate with tools like LangChain for advanced workflows.

- Schedule auto-updates but test in a VM first for stability.
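
To see why quantization matters on a 16GB machine, it helps to estimate the memory that model weights alone require: parameters times bits per weight, divided by 8 to get bytes. The sketch below does that arithmetic. The function name `weights_gib` and the 3B and 8B parameter counts are illustrative assumptions, and the result is a lower bound because it ignores the KV cache, runtime overhead, and the extra metadata that real quantized formats store.

```shell
#!/bin/sh
# Rough weight-memory estimate: parameters x bits per weight / 8 bytes.
# Treat the result as a floor, not a measured footprint.

weights_gib() {  # usage: weights_gib PARAMS_IN_BILLIONS BITS_PER_WEIGHT
    LC_ALL=C awk -v p="$1" -v b="$2" \
        'BEGIN { printf "%.1f\n", p * 1e9 * b / 8 / (1024 * 1024 * 1024) }'
}

# Illustrative sizes (3B and 8B are example parameter counts).
for params in 3 8; do
    for bits in 16 8 4; do
        echo "${params}B at ${bits}-bit: about $(weights_gib "$params" "$bits") GiB of weights"
    done
done
```

By this estimate an 8B model needs about 14.9 GiB for weights at 16-bit but only about 3.7 GiB at 4-bit, which is the difference between not fitting and fitting comfortably alongside the operating system in 16GB of RAM.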

Troubleshooting

Common issues and fixes:

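- Out of memory: Use smaller models or add swap: `sudo fallocate -l 16G /swapfile && sudo chmod 600 /swapfile && sudo mkswap /swapfile && sudo swapon /swapfile`.

- Docker permission error: Make sure your user is in the docker group, then log out and back in.

- Model pull fails: Check your internet connection. Behind a proxy, set `HTTPS_PROXY` for the Ollama service; `ollama pull --insecure` only helps with registries that lack valid TLS.

- High CPU usage: Enable GPU acceleration if a supported GPU is present: install ROCm for AMD or CUDA for NVIDIA.

- WebUI not loading: Verify that something is listening on the port: `sudo ss -tuln | grep 3000` (`netstat` works too, but requires the net-tools package on Ubuntu 24.04).

- Slow inference: Close background apps and update the BIOS for better power management.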
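
Several of the checks above can be gathered into one read-only diagnostic pass. The sketch below is illustrative rather than exhaustive; `has_word` is a hypothetical helper, and port 3000 is assumed to be the Open WebUI port from the setup steps.

```shell
#!/bin/sh
# Diagnostic sketch for the issues listed above. Read-only: it changes nothing.

# Succeed if WORD appears in the space-separated LIST.
has_word() {  # usage: has_word "LIST" WORD
    case " $1 " in
        *" $2 "*) return 0 ;;
        *) return 1 ;;
    esac
}

# Docker permission error: is the current user in the docker group?
if has_word "$(id -nG)" docker; then
    echo "docker group: yes"
else
    echo "docker group: no (run usermod, then log out and back in)"
fi

# Out of memory: count active swap areas (/proc/swaps has one header line).
swap_entries=$(awk 'NR > 1 {n++} END {print n + 0}' /proc/swaps 2>/dev/null)
echo "active swap areas: ${swap_entries:-0}"

# WebUI not loading: is anything listening on port 3000?
if command -v ss >/dev/null 2>&1; then
    if ss -tln 2>/dev/null | grep -q ':3000 '; then
        echo "port 3000: listening"
    else
        echo "port 3000: nothing listening"
    fi
fi
```

Group membership is read from the current login session, so a freshly added docker group shows up only after logging out and back in, which matches the fix described above.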

Final Thoughts

Your mini PC is now a powerful local AI server! This setup empowers cybersecurity tasks like anomaly detection, student projects in AI ethics, gaming mods with AI, or home servers. Enjoy privacy, speed, and scalability. As AI evolves in 2026, revisit for updates. Share your experiences in comments.

FAQs

Can any mini PC run a local AI server?

No, opt for ones with NPU, 16GB+ RAM, and NVMe. Intel NUC or Beelink models work great.

Is Ubuntu better than Windows for this?

Yes for efficiency and Docker support, but Windows with WSL2 is viable for familiarity.

How much power does it consume?

Idle: 10-20W, under load: 50-100W depending on model and hardware.
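
To turn those wattages into monthly energy use, multiply watts by hours per day and by 30 days, then divide by 1000 to get kWh. A small sketch, assuming a hypothetical usage pattern of 22 idle hours at 15W and 2 loaded hours at 75W per day (both values chosen from within the ranges above):

```shell
#!/bin/sh
# Monthly energy sketch: watts x hours per day x 30 days / 1000 = kWh.

kwh_per_month() {  # usage: kwh_per_month WATTS HOURS_PER_DAY
    LC_ALL=C awk -v w="$1" -v h="$2" \
        'BEGIN { printf "%.1f\n", w * h * 30 / 1000 }'
}

# Hypothetical daily pattern, not a measurement.
echo "idle: $(kwh_per_month 15 22) kWh per month"
echo "load: $(kwh_per_month 75 2) kWh per month"
```

Multiply the total by your local electricity rate to estimate the running cost.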

Can I access it remotely securely?

Yes, use Tailscale VPN or WireGuard with UFW rules and key auth.
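
One way to combine a VPN with UFW is to accept the web UI port only on the VPN interface. The sketch below prints the rules for review instead of running them. It assumes the interface name `tailscale0`, which Tailscale normally creates on Linux (a WireGuard setup would typically use a name such as `wg0`), and `vpn_only_rules` is a hypothetical helper.

```shell
#!/bin/sh
# Dry-run sketch: print UFW rules that accept a port only on a VPN interface.
# Nothing is executed; review the output, then run the lines yourself.

vpn_only_rules() {  # usage: vpn_only_rules INTERFACE PORT
    # Remove the broad allow rule from the setup steps, if it was added.
    echo "sudo ufw delete allow $2/tcp"
    # Accept the port on the VPN interface only, then deny it elsewhere.
    echo "sudo ufw allow in on $1 to any port $2 proto tcp"
    echo "sudo ufw deny $2/tcp"
}

# Interface name is an assumption; check yours with `ip link`.
vpn_only_rules tailscale0 3000
```

Keep in mind that ports published by Docker can bypass UFW, because Docker manages its own iptables rules. Binding the published port to a specific address (for example `-p 127.0.0.1:3000:8080`) is one way to limit exposure.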

What AI models should I start with?

Llama 3.2 or Phi-3 for balance of speed and capability on mini PCs.
